Just following on from the feedback from my last post about process v outcome in safety management, below is a short video I did a little while ago that explains some of the concepts further.

Enjoy.

Today I was reading a LinkedIn post lamenting the state of health and safety management, evidenced by too many “safety stickers” on a piece of machinery.

A commenter noted that the situation was “absolute madness”, which doesn’t keep anyone safe. Much of the conversation from there was focused on whose “fault” it was and we ended up with all of the usual suspects in the firing line – insurers, lawyers, consultants and so on. Probably quite justified too.

To my mind, this issue illustrates the disconnect apparent in health and safety management between “process” and “outcome”. It seems to me that health and safety management is obsessed with process – the way that we “do” safety. This obsession means that every few years somebody reinvents the way we do safety, or the way we do parts of safety. As evidence of this you only need to think of the transition from safety culture, to safety 1, through to safety 2 and now safety differently – with god only knows what in between. On a micro level, just think how many iterations of the JHA you have seen during your working career.

What makes this more interesting is the process doesn’t really matter. How you “do” safety is not really an issue. What is important is whether you can show your process achieves the outcome it was designed for.

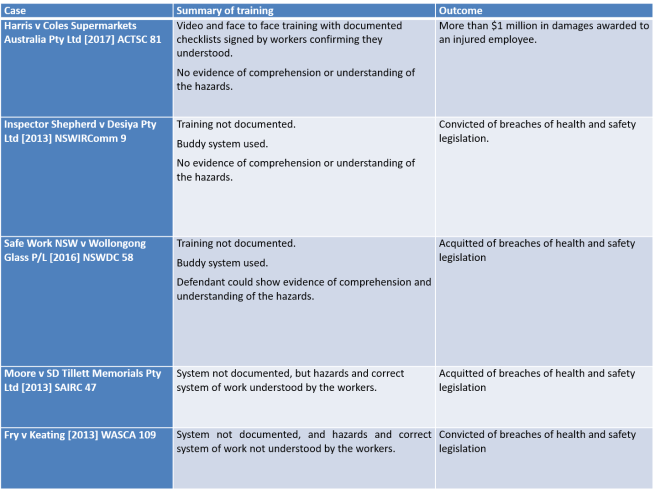

The table below lists a series of cases looking at the “outcome” of understanding hazards. The “processes” were all different: documented, undocumented, buddy systems, on-the-job training and so on. But even where the processes were the same, this did not determine the decision – the decision was determined by whether the outcome, an understanding of hazards and risks, was achieved.

So, the question is not how fancy, new or shiny your process is. The question is whether it achieves the outcome.

It seems hardly a day goes by without social media raising a new discussion about the merits or otherwise of “Zero Harm”.

As I understand the various arguments “for” and “against”, there seem to be three broad categories of argument (although I do not discount further or additional arguments).

One argument says that Zero Harm is not a target, or a goal, rather it is an aspiration – something to pursue. If I may be so bold as to paraphrase Prof Andrew Hopkins, it is like a state of grace – something to be striven for, but never truly achieved.

Another argument, more of a middle ground, articulates that Zero Harm is “okay”, but may have an unintended consequence of driving adverse behaviour. In particular, it is argued that Zero Harm causes individuals and organisations to hide incidents or manipulate injury data in support of an organisation’s “zero” targets.

Yet another argument says that the language of zero is totally corrosive and destructive. It argues the language of zero – amongst other things – primes a discourse that is anti-learning and anti-community (See, For the Love of Zero by Dr Robert Long).

I would like to use this article to discuss two matters. First, the Safety Paradox in the context of aspirational statements, only using “zero” as a starting example. Second, to demonstrate how aspirational statements can be used against organisations. Both these points are closely related but ultimately, I want to argue whatever your “aspirations” you need to have “assurance” about the effect they have on your business.

The Safety Paradox is a concept I have been exploring for some time now. The Safety Paradox supposes that our safety initiatives have within them the potential to improve safety and cause harm.

In my view, the single biggest weakness in modern safety management is the assumption that safety management initiatives are “good”. I have no doubt that the proponents of Zero Harm suffer from this assumption.

The question of whether Zero Harm is good or bad is, on one view, totally irrelevant. If you are a Zero Harm organisation the only thing that really matters is the impact Zero Harm has in your workplace.

My personal experience with Zero Harm means that I remain unconvinced of its benefits, but I do not feel I am closed to being persuaded otherwise. It is just that I have never worked with an organisation that has been able to address the three questions proposed above. Moreover, in my experience, there is usually a significant disconnect between corporate intentions and operational reality: what management thinks is going on is often very different from what the workforce believes.

Considering all the published criticism of Zero Harm as a concept, I do not think it is unfair that the onus should be on Zero Harm organisations – including government regulators – to demonstrate that Zero Harm achieves its intended purpose and does not have a negative impact on safety.

Now, this is more than a matter of semantics. Aspirational statements can be, and are, used against individuals and organisations.

On 21 August 2009 an uncontrolled release of hydrocarbons occurred on the West Atlas drilling rig operating off the North-West coast of Australia. The incident reawakened the Australian public to the dangers of offshore oil and gas production, leading to a Commission of Inquiry into the event.

During the Commission the aspirational statements of one organisation were used against an individual. The criticism was that a contractor had removed a piece of safety critical hardware, but not replaced it, and had not been directed by the relevant individual to replace it.

There was some discussion about a presentation provided by the organisation, and that resulted in the following exchange.

Q: All right. If the operator could go to page 0004 of this document, that overhead, which is part of the induction training of drilling supervisors, is entitled “Standards”. Do you see that?

A: Yes.

Q: If you could read what is said there, you would agree it captures, if you like, a profound truth?

A: Yes.

Q: Do you agree that that is a truth not simply applicable to drilling supervisors but also applicable to PTT management onshore?

A: Yes.

Q: I want to suggest to you, sir, that your decision not to instruct Mr O’Shea or Mr Wishart to reinstall the 9-5/8″ PCC represents a very significant departure from what is described on that screen.

A: Yes, I can concede that.

Q: Without wishing to labour the point, your decision not to insist upon the reinstallation of the 9-5/8″ PCC was a failure in both leadership and management on your part?

A: Yes, that’s what it seems now.

Q: With respect, sir, I’m suggesting to you that, faced with the circumstances you were, your deference, as it were, to not treading on the toes of the rig personnel and insisting on the reinstallation was, at that point in time, a failure in leadership and management on your part.

A: I will accept that.

How many of these untested platitudes infect organisations, waiting for the opportunity to expose the business to ridicule and criticism?

Or consider if you will, the following scenario. An employee is dismissed for breaching mobile phone requirements when his mobile phone was found in the cabin of the truck he had been operating.

The employee brought an unfair dismissal claim and the presiding tribunal found that there was a valid reason to terminate his employment. However, the tribunal also found that the termination was unfair for several procedural reasons. In part, the tribunal relied on the level of training and information that the employee had been provided about the relevant procedure.

The training documentation provided did not clearly demonstrate that employees were trained in this new procedure and signed accordingly, or that it was given a significant roll-out to employees commensurate with their ‘zero tolerance’ attitude to incidents of breaches, given how this case has been pursued (my emphasis added).

If you are going to have a “Zero” aspiration, that has to be reflected in your business practices. It seldom is.

What I think these examples illustrate is an inherent weakness in the way health and safety is managed. We, as an industry, are overwhelmingly concerned with “how” we manage health and safety risks without paying anything like enough attention to whether the “how” works.

Do all of our aspirations and activities actually manage health and safety risks, or are we just keeping people busy or, worse, wasting their time? As importantly, how do we know our initiatives are not part of the problem?

BP’s corporate management mandated numerous initiatives that applied to the U.S. refineries and that, while well-intentioned, have overloaded personnel at BP’s U.S. refineries. This “initiative overload” may have undermined process safety performance at the U.S. refineries. (The Report of the BP US Refineries Independent Safety Review Panel (Baker Panel Review), page xii).

There is no doubt that safety is not the only management discipline that suffers from these deficiencies: “style over substance” and “window dressing”. But if we claim the high moral ground of protecting human health and life, then perhaps the onus on us to show that what we do works is also higher.

My social media feeds have been abuzz recently following the release of Safe Work Australia’s report, Measuring and Reporting on Work Health & Safety. In part (or perhaps wholly) it is my fault for suggesting the report focussed on activity over assurance and could be problematic in that regard. (see for example LinkedIn, Measuring and Reporting on Work Health & Safety, Everything is Green: The delusion of health and safety reporting).

In several comments and emails, I have been asked to provide some “practical” examples. While it is difficult to provide something that will satisfy everyone, below I offer a few questions that might be useful to interrogate the efficacy of health and safety reporting in your organisation.

I would preface the example below with an observation on due diligence.

Despite what several commentators and marketing campaigns might have you believe, due diligence cannot be satisfied with a checklist, or by attending a WHS training session. The concept of due diligence existed long before WHS legislation, and it has been examined by courts and tribunals in many areas of business. One of the underpinning concepts of due diligence is “independent thought”.

It is incumbent on an individual who is charged with exercising due diligence to exercise a level of independence to understand the “thing” they are required to be diligent about. If that thing is safety, due diligence requires more than passively accepting a monthly WHS report. Due diligence requires independent thought and challenge to understand what you need to know about health and safety and whether the report is informing you about what you need to know.

So, in the spirit of that inquiry, what questions might you ask?

What is the purpose of health and safety reporting?

It might seem trite, but I think it is a legitimate question to start with. After all, if we do not start with a purpose, how do we judge effectiveness?

To many, the purpose of health and safety reporting might seem obvious, but if the history of workplace health and safety has taught us nothing else in the last 30 years, it has taught us about the dangers of assumptions. Do not assume to know the purpose of anything in health and safety – actually know the purpose and test against that purpose.

In many organisations, health and safety reporting is sold as a legal requirement, so in keeping with that theme, perhaps the purpose of health and safety reporting might be:

To demonstrate the extent to which our health and safety risks are managed so far as reasonably practicable.

But before the comments start flowing about legal expectations being our minimum standards (sigh!), perhaps we can agree on something like:

To demonstrate the extent to which our health and safety risks are managed.

For those of you who aspire to “zero”, I will leave it to you to come up with your own purpose statement for health and safety reporting. Good luck.

What is the purpose and relevance of an element of health and safety reporting?

Health and safety reports might be filled with all sorts of statements and data. But what purpose do they serve?

A very popular health and safety reporting metric is the number (or percentage) of corrective actions closed out following an incident investigation.

On its face, that statistic is nothing more than a measure of activity – how many things have been done against how many things should have been done. On its face, and at its highest, it might be a measure of “operating discipline” – we are good at doing the things we said we would.

But if the purpose of health and safety reporting is to demonstrate the extent to which our health and safety risks are managed, it does not seem to add much value at all.

Another way to think about a statistical set of action items being closed out is to consider them as an indicator of the effectiveness of incident investigations. After all, the quality of incident investigations is very important to the overall quality of health and safety management and something that inquiries are likely to look at in the event of an accident (See for example Everything is Green: The delusion of health and safety reporting).

Perhaps if people had spent more time asking this question about injury rate data over the past 25 years, it would not have pride of place in safety management today.

What assumption do we have to make if an element of health and safety reporting is going to have value?

If we argue that the number (or percentage) of corrective actions closed out following an incident investigation tells us something about the quality of incident investigations, what assumptions do we have to make?

If 100% of corrective actions from incident investigations have been closed out, and I have a sense of comfort from that, I am making several assumptions. I am making assumptions:

None of these issues are revealed by the number (or percentage) of corrective actions closed out following an incident investigation.

Indeed, a health and safety report could show 100% of corrective actions from incident investigations have been closed out without any of the assumptions above being true.

And if these assumptions are not valid, and if a major accident happens, and if it is found that incidents have never been properly investigated[1], how can it be said that an organisation and its management were serious about health and safety and exercising due diligence?

I have always believed, at its core, health and safety management is about controlling health and safety hazards.

To some extent, I do not care how organisations say they manage health and safety – safety 1, safety 2, safety differently, visible felt leadership, rules, procedures, prescription, discretion, people are the problem, people are the solution etc., etc., etc. – prove to me that it works. Prove to me that what you do controls the health and safety hazards in your business.

If two people die in an electrical incident at your workplace, nobody cares what your last safety culture survey reveals. You need to demonstrate how the risk of electrocution was managed in your organisation, and whether it was managed effectively.

No one cares what your TRIFR rate is, no one cares how many action items have been closed out, no one cares how many safety interactions your managers have, no one cares how many hazards have been reported, no one cares how many pre-start meetings you have conducted ….

The relevant issue is whether health and safety hazards have been effectively managed.

The things we do in the name of health and safety only matter to the extent that they have a role to play in managing health and safety hazards.

If the number of action items closed out after an incident investigation is important to how hazards are managed, we should be able to explain how and demonstrate the relationship.

Health and safety reporting only matters if it gives us an insight into how well we manage health and safety.

What does your health and safety reporting really tell you?

[1] See for example the Royal Commission into the Pike River tragedy.

I approach this article with some trepidation.

I was recently sent a copy of Safe Work Australia’s report, Measuring and Reporting on Work Health & Safety, and subsequently saw a post on LinkedIn dealing with the same. I made some observations on the report in response to the original post which drew the ire of some commentators (although I may be overstating it and I apologise in advance if I have), but I did promise a more fulsome response, and in the spirit of a heartfelt desire to contribute to the improvement of health and safety in Australia – here it is.

I want to start by saying that I have the utmost respect for the authors of the report and nothing is intended to diminish the work they have produced. I also accept that I am writing from a perspective heavily influenced by my engagement with health and safety through the legal process.

I also need to emphasise that I am not dismissing what is said in the report, nor saying that some of the structures and processes proposed by the report are not valid and valuable. But I do think the emphasis in the report on numerical and graphical information has the potential to blind organisations to the effectiveness of crucial systems.

I also want to say that I have witnessed over many years – and many fatalities – organisations that can point to health and safety accreditations, health and safety awards, good personal injury rate data, good audit scores and “traffic lights” all in the green. At the same time, a serious accident or workplace fatality exposes that the same “good” safety management systems are riddled with systemic failure – long term systemic departures from the requirements of the system that had not been picked up by any of the health and safety measures or performance indicators.

I am not sure how many ways I can express my frustration when executive leadership hold a sincere belief that they have excellent safety management systems in place, only to realise that those systems do not even begin to stand up to the level of scrutiny they come under in a serious legal process.

In my view, there is a clarity to health and safety assurance that has been borne out in every major accident enquiry, a clarity that was overlooked by the drafters of WHS Legislation and a clarity which is all too often overlooked when it comes to developing assurance programs. With the greatest possible respect to the authors of this report, I fear this has been overlooked again.

In my view, the report perpetuates activity over assurance, and reinforces that assumptions can be drawn from the measure of activity when those assumptions are simply not valid.

Before I expand on these issues, I want to draw attention to another point in the report. At page 38 the report states:

“Each injury represents a breach of the duty to ensure WHS”

To the extent that this comment is meant to represent in some way the “legal” duty, I must take issue with it. There is no duty to prevent all injuries, and injury does not represent, in and of itself, a breach of any duty to “ensure WHS”. The Full Court of the Western Australia Supreme Court made this clear in Laing O’Rourke (BMC) Pty Ltd v Kirwin [2011] WASCA 117 [31], citing with approval the Victorian decision, Holmes v RE Spence & Co Pty Ltd (1992) 5 VIR 119, 123 – 124:

“The Act does not require employers to ensure that accidents never happen. It requires them to take such steps as are practicable to provide and maintain a safe working environment.”

But to return to the main point of this article.

In my view, the objects of health and safety assurance can best be understood from comments of the Pike River Royal Commission:

“The statistical information provided to the board on health and safety comprised mainly personal injury rates and time lost through accidents … The information gave the board some insight but was not much help in assessing the risks of a catastrophic event faced by high hazard industries. … The board appears to have received no information proving the effectiveness of crucial systems such as gas monitoring and ventilation.”

I have written about this recently, and do not want to repeat those observations (See: Everything is Green: The delusion of health and safety reporting), so let me try to explain this in another way.

Whenever I run obligations training for supervisors and managers we inevitably come to the question of JHAs – and I am assuming that readers will be familiar with that “tool” so will not explain it further.

I then ask a question about how important people think the JHA is. On a scale of 1 to 10, with 1 being the least important and 10 being the most, how important is the JHA?

Inevitably, the group settles on a score of somewhere between 8 and 10. They all agree that the JHA is “critically important” to managing health and safety risk in their business. They all agree that every high hazard activity they undertake requires a JHA.

I then ask, what is the purpose of the JHA. Almost universally groups agree that the purpose of the JHA is something like:

So, my question is, if the JHA is a “crucial system” or “critically important” and a key tool for managing every high-risk hazard in the workplace, is it unreasonable to expect that the organisation would have some overarching view about whether the JHA is achieving its purpose?

They agree it is not unreasonable, but such a view does not exist.

I think the same question could be asked of every other potentially crucial safety management system including contractor safety management, training and competence, supervision, risk assessments and so on. If we look again to the comments in the Pike River Royal Commission, we can see how important these system elements are:

“Ultimately, the worth of a system depends on whether health and safety is taken seriously by everyone throughout an organisation; that it is accorded the attention that the Health and Safety in Employment Act 1992 demands. Problems in relation to risk assessment, incident investigation, information evaluation and reporting, among others, indicate to the commission that health and safety management was not taken seriously enough at Pike.”

But equally, the same question can be asked of high-risk “hazards” – working at heights, fatigue, psychological wellbeing etc.

What is the process to manage the hazard, and does it achieve the purpose it was designed to achieve?

The fact that I have 100% compliance with closing out corrective actions tells me no more about the effectiveness of my crucial systems than the absence of accidents.

The risk of performance measures that are really measures of activity is that they can create an illusion of safety. The fact that we have 100% compliance with JHA training, a JHA was done every time it was required to be done, or that a supervisor signed off every JHA that was required to be signed off – these are all measures of activity; they do not tell us whether the JHA process has achieved its intended purpose.

So, what might a different type of “assurance” look like?

First, it would make a very conscious decision about the crucial systems or critical risks in the organisation and focus on those. Before I get called out for ignoring everything else, I do not advocate ignoring everything else – by all means, continue to use numerical and similar statistical measures for the bulk of your safety, but when you want to know that something works – you want to prove the effectiveness of your crucial systems – make a conscious decision to focus on them.

If I thought that the JHA process was a crucial system, I would want to know how that process was supposed to work. If it is “crucial”, I should understand it to some extent.

I would want a system of reporting that told me whether the process was being managed the way it was supposed to be. And whether it worked. I would like to know, for example:

I would also want to know what triggers were in place to review the quality of the JHA process – was our documented process a good process? Have we ever reviewed it internally? Do we ever get it reviewed externally? Are there any triggers for us to review our process and was it reviewed during the reporting period – if we get alerted to a case where an organisation was prosecuted for failing to implement its JHA process, does that cause us to go and do extra checks of our systems?

We could ask the same questions about our JHA training.

I would want someone to validate the reporting. If I am being told that our JHA process is working well – that it is achieving the purpose it was designed for – I would like someone (from time to time) to validate that. To tell me, “Greg, I have gone and looked at operations and I am comfortable that what you are being told about JHAs is accurate. You can trust that information – and this is why …”.

As part of my personal due diligence, if I thought JHAs were crucial, when I went into the field, that is what I would check too. I would validate the reporting for myself.

I would want some red flags – most importantly, I would want a mandatory term of reference in every investigation requiring the JHA process to be reviewed for every incident – not whether the JHA for the job was a good JHA, but whether our JHA process achieved its purpose in this case, and if not, why not.

If my reporting is telling me that the JHA process is good, but all my incidents are showing that the process did not achieve its intended purpose, then we may have systemic issues that need to be addressed.

I would want to create as many touch points as possible with this crucial system to understand if it was achieving the purpose it was intended to achieve.

My overarching concern, personally and professionally, is to structure processes to ensure that organisations can prove the effectiveness of their crucial systems. I have had to sit in too many little conference rooms, with too many managers who have audits, accreditations, awards and health and safety reports that made them think everything was OK when they have a dead body to deal with.

I appreciate the attraction of traffic lights and graphs. I understand the desire to find statistical and numerical measures to assure safety.

I just do not think they achieve the outcomes we ascribe to them.

They do not prove the effectiveness of crucial systems.

I do not think that there is any serious view suggesting that “leadership” is not an important, if not the most important, driver of safety performance. One of the main findings from a 2002 review of Safety Culture was:

… management was the key influence of an organisation’s safety culture. A review of the safety climate literature revealed that employees’ perceptions of management’s attitudes and behaviours towards safety, production and issues such as planning, discipline etc. was the most useful measurement of an organisation’s safety climate. The research indicated that different levels of management may influence health and safety in different ways, for example managers through communication and supervisors by how fairly they interact with workers (Thompson, 1998). Thus, the key area for any intervention of an organisation’s health and safety policy should be management’s commitment and actions towards safety (Safety Culture: A review of the literature).

In the wake of findings like these, and numerous others, it is unsurprising that safety leadership often dominates discussions about safety management.

But are there conversations about safety leadership that we are not having and should be?

To my mind, the hard work in health and safety management is understanding if, or the extent to which, health and safety risks in our business are being controlled. All too often, however, in my experience “leadership” is an excuse to avoid the hard work of health and safety management.

The “psychology” (and I use that term as a complete layperson) of safety leadership seems to be that if I can convince my workforce that I genuinely care for them and that safety is genuinely important, then safety will take care of itself.

If I “care”, if I am a “safety leader” I do not need to do the hard work to critically challenge incident investigations, I do not need to analyse, understand and challenge audits. If I am a “safety leader” then I can accept declining personal injury rates and green traffic lights on my corporate scorecard as evidence that my safety management system is working, without ever having to challenge the assumptions that underpinned that information. Assumptions that have been shown time and again to be wrong.

This is the same discourse that threaded its way through safety culture: It doesn’t matter how bad our management systems are because we have a good “culture”. It is also the same discourse that is starting to creep into the next wave of safety thinking, concepts like “safety differently” and “appreciative enquiry”.

I make no comment on the efficacy of leadership, culture, safety differently, appreciative enquiry or whatever the next trend will be, but I do question where, in any of these concepts, we do the hard work of confirming that our risks are being controlled.

I recall many years ago reviewing a matter where a worker sent a hazardous substance through the internal mail using a yellow inter-office envelope (back when they existed). The worker broke every one of the organisation’s procedures and protocols for managing hazardous substances, yet the organisation viewed this dangerous event as a triumph of their “culture”, because the worker “cared”.

The twisted logic where organisations use leadership or culture to wallpaper over the cracks of ineffective safety management systems, and actively avoid the hard work of understanding if their risks are being controlled, is very often brought into stark relief following a disaster.

The next time you are in a meeting discussing safety management listen to see if leadership or culture is being used as an avoidance strategy. Are the difficult topics such as improving the quality of incident investigation or clarifying complex and bureaucratic safety management systems or improving risk assessments bypassed with comments like:

we just need to get out and be seen more

or

we just need to spend more time in the field talking to the blokes

Is this leadership or an excuse to avoid the hard work?

Over and above avoiding what really needs to be done, is it possible that the things we do in the name of “leadership” have the potential to actively undermine safety in our organisations?

Whatever your “leadership” objective might be, whether it is to demonstrate commitment, to understand the work being performed in your organisation, to appreciate what might be preventing people from complying with safety procedures or any other objective, how do you know that your actions in the name of leadership are achieving those objectives? Because for all your good intentions there is a real risk that your presence in the field talking about safety might have the opposite effect. It might promote cynicism amongst your workforce, it might disengage them from your safety message.

You may be seen as a leader whose only concern is to cover their own backside and who obsesses over safety issues important to you, without really listening to the concerns of the workforce.

How do you know if your safety leadership works?

I think that much of what is done in the name of safety and health has, consciously or unconsciously, devolved into “window dressing”. Much of what we do is held up to the public or to our workforce as evidence of our commitment to safety, yet the substantive hard work necessary to understand if our health and safety risks are being managed remains undone – the façade of health and safety management is attractive but the building is crumbling.

Safety leadership and related concepts of care and culture have a place. More than that, they are critically important. But they are not buzzwords to be lightly tossed around and, as a critical process, leadership deserves the same level of scrutiny and analysis as any of your other critical processes.

Today (29 October 2016) the ABC had an article on the ongoing coverage of the tragic loss of lives at Dreamworld in Queensland.

I have commented before about the disconnect between the loss of life in this workplace accident and the near weekly loss of life in Australian workplaces that the coverage of this incident highlights. That disconnect was underscored by a picture of the Federal Opposition Leader, Bill Shorten, laying flowers outside Dreamworld. I do not begrudge Mr Shorten the opportunity to express his condolences (or advance his political position depending on your level of cynicism), but I cannot recall too many times political leaders have given similar public displays of solidarity when people die at our construction, mining, agricultural or any other workplaces.

But what has prompted this article is the simplistic, reactive, leaderless response that politicians trot out in the face of these types of events.

The ABC Article reports Queensland Premier Annastacia Palaszczuk as saying:

“It is simply not enough for us to be compliant with our current laws, we need to be sure our laws keep pace with international research and new technologies,”

“The audit will also consider whether existing penalties are sufficient to act as deterrents, and whether these should be strengthened to contain provisions relating to gross negligence causing death.

“Because we all know how important workplace safety is and how important it is to have strong deterrents.

“That’s why Queensland has the best record in Australia at prosecuting employers for negligence – and we are now examining current regulations to see if there are any further measures we can take to discourage unsafe practices.”

The idea that we “should not be compliant with our current laws” is both a nonsense and a failure of policy makers to properly accept the findings of the Robens Report published in the early 1970s. The reason our laws cannot keep pace with “international research and new technologies” is that governments continue to insist on producing highly prescriptive suites of regulation which in most cases are adopted by organisations as the benchmark for “reasonably practicable”.

For most businesses, particularly small and medium-sized businesses, technical compliance with regulation is the high-water mark of safety management – an approach reinforced by the “checkbox” compliance mentality of many regulators.

WHS legislation is a leading example of this failure of policy, in so far as it increased the number of regulations in most of the jurisdictions where it has been implemented.

Flexible, innovative safety management requires a regulatory framework that promotes it, not limits or discourages it. How can a regulator have any credibility when it calls on industry to keep pace with “international research”, when it continues to define safety performance through the publication of lost time and other lag injury rates?

Ms Palaszczuk then adopts the standard “tough on safety” call to arms, without taking the time to recognise inherent contradictions in what she is saying. She boasts that “Queensland has the best record in Australia at prosecuting employers for negligence”, but hints at tougher penalties still.

If the considerable penalties under the WHS Legislation and the “best record” of prosecuting employers are not a sufficient deterrent, why would “tougher” and “better” be any different?

I have written about these types of matters before, and would just ask that before policymakers go charging off in pursuit of higher penalties and more prosecutions, we stop and take the time to see if this tragedy can provide the opportunity lost during harmonisation and introduction of WHS legislation.

That lost opportunity was a chance to stop and consider the way that we regulate and manage health and safety in this country.

And can we start with the question of whether criminalising health and safety breaches and managing safety through a culture of fear driven by high fines and penalties is the best way to achieve the safety outcomes we want?

What is the evidence proving high penalties and prosecutions improve safety outcomes?

Are there ways that we can regulate safety to provide significant deterrents and consequences for people who disregard health and safety in the workplace, but at the same time foster a culture of openness, sharing and a willingness to learn and improve?

Can we redirect the time, money, expertise and resources that are poured into enforcement, prosecution and defending legal proceedings in a way that adds genuine value as opposed to headline value?

This is a chance to stop and think. This is a chance for the health and safety industry to stand up, intervene and take a leadership role in health and safety.

If we do not, the intellectual vacuum will continue to be filled by the historical approaches that have brought us to where we are today.

In August 2016, I wrote a WHS Update about the High Court decision, Deal v Father Pius Kodakkathanath [2016] HCA 31, which considered the legal test of Reasonably Practicable in the context of Australian health and safety legislation. Shortly after that, one of my connections on LinkedIn posted an article about Reasonably Practicable. The article offered an engineering perspective on “As Low as Reasonably Practicable” (ALARP), stating:

… recent developments in Australian workplace health and safety law place proactive responsibilities on senior personnel in organisations, so they must be fully informed to make proper decisions

This sentiment seemed similar to an earlier engineering publication which argued that ALARP and “So Far as is Reasonably Practicable” (SFARP) were different and that this difference was, at least in part, a result of “harmonised” WHS legislation.

In both cases, I believed the articles were misaligned with the legal construct of Reasonably Practicable and misrepresented that there had been a change in the legal test of Reasonably Practicable prompted by changes to WHS legislation.

This background caused me to reflect again on the notion of Reasonably Practicable and what it means in the context of legal obligations for health and safety.

To start, I do take issue with the suggestion that changes to WHS legislation have resulted in a shift in what Reasonably Practicable means. The basis of this idea seems to be an apparent change in terminology from ALARP to SFARP.

The term SFARP was in place in health and safety legislation before the introduction of WHS and jurisdictions that have not adopted WHS legislation still use the term. For example, the primary obligations under the Victorian Occupational Health and Safety Act 2004 are set out in section 20, and state:

To avoid doubt, a duty imposed on a person by this Part or the regulations to ensure, so far as is reasonably practicable, health and safety requires the person …

Indeed, the architects of WHS legislation[1] specifically retained the term Reasonably Practicable because it was a common and well-understood term in the context of Australian health and safety legislation:

5.51 Reasonably practicable is currently defined or explained in a number of jurisdictions. The definitions are generally consistent, with some containing more matters to be considered than others. The definitions are consistent with the long settled interpretation by courts, in Australia and elsewhere.

5.52 The provision of the Vic Act relating to reasonably practicable was often referred to in submissions (including those of governments) and consultations as either a preferred approach or a basis for a definition of reasonably practicable.

5.53 We recommend that a definition or section explaining the application of reasonably practicable be modelled on the Victorian provision. We consider that, with some modification, it most closely conforms to what would be suitable for the model Act. [My emphasis added]

In my view, it is unarguable that the concept of Reasonably Practicable has been well-settled in Australian law for a considerable period, and the concept has not changed with the introduction of WHS legislation.

If we accept that Reasonably Practicable has been consistently applied in Australia for some time, the next question is, what does it mean?

Reasonably Practicable is a defined term in most health and safety legislation in Australia. Section 20(2) of the Victorian Occupational Health and Safety Act 2004, for example, states:

(2) To avoid doubt, for the purposes of this Part and the regulations, regard must be had to the following matters in determining what is (or was at a particular time) reasonably practicable in relation to ensuring health and safety—

(a) the likelihood of the hazard or risk concerned eventuating;

(b) the degree of harm that would result if the hazard or risk eventuated;

(c) what the person concerned knows, or ought reasonably to know, about the hazard or risk and any ways of eliminating or reducing the hazard or risk;

(d) the availability and suitability of ways to eliminate or reduce the hazard or risk;

(e) the cost of eliminating or reducing the hazard or risk.

In the High Court decision, Slivak v Lurgi (Australia) Pty Ltd [2001] HCA 6, Justice Gaudron described Reasonably Practicable as follows:

The words “reasonably practicable” have, somewhat surprisingly, been the subject of much judicial consideration. It is surprising because the words “reasonably practicable” are ordinary words bearing their ordinary meaning. And the question whether a measure is or is not reasonably practicable is one which requires no more than the making of a value judgment in the light of all the facts. Nevertheless, three general propositions are to be discerned from the decided cases:

Another High Court decision, Baiada Poultry Pty Ltd v The Queen [2012] HCA 14, emphasised similar ideas.

The case concerned the death of a subcontracted worker during forklift operations. Baiada was the Principal who had engaged the various contractors to perform the operations, and in an earlier decision the court had concluded:

it was entirely practicable for [Baiada] to require contractors to put loading and unloading safety measures in place and to check whether those safety measures were being observed from time to time ((2011) 203 IR 396 at 410)

On appeal, the High Court framed this finding differently. They observed:

As the reasons of the majority in the Court of Appeal reveal by their reference to Baiada checking compliance with directions it gave to [the contractors], the question presented by the statutory duty “so far as is reasonably practicable” to provide and maintain a safe working environment could not be determined by reference only to Baiada having a legal right to issue instructions to its subcontractors. Showing that Baiada had the legal right to issue instructions showed only that it was possible for Baiada to take that step. It did not show that this was a step that was reasonably practicable to achieve the relevant result of providing and maintaining a safe working environment. That question required consideration not only of what steps Baiada could have taken to secure compliance but also, and critically, whether Baiada’s obligation “so far as is reasonably practicable” to provide and maintain a safe working environment obliged it: (a) to give safety instructions to its (apparently skilled and experienced) subcontractors; (b) to check whether its instructions were followed; (c) to take some step to require compliance with its instructions; or (d) to do some combination of these things or even something altogether different. These were questions which the jury would have had to decide in light of all of the evidence that had been given at trial about how the work of catching, caging, loading and transporting the chickens was done.[3] [my emphasis added]

In light of these and other decided cases, it is possible to form a practical test to consider what is Reasonably Practicable. In my view, it is necessary for an organisation to demonstrate that they:

What constitutes Proper Systems and Adequate Supervision is a judgement call that needs to be determined with regard to the risks. It requires an organisation to balance the risk against the cost, time and trouble of managing it.[4]

It is also worth noting at this point that Reasonably Practicable is, generally speaking, an organisational obligation. It is not an individual obligation[5] and, in particular, it is not an employee obligation.

When working with clients, I often see safety documents that employees are required to sign, stating that risks have been controlled to “ALARP”. This is not the employee’s responsibility, and the extent to which an employee does or does not control the risk to ALARP does not affect an employer’s obligations.

In broad terms, it is the organisation’s (PCBU or employer) obligation to manage risks as low as, or so far as is, Reasonably Practicable. The employee obligation is to do everything “reasonable”. This includes complying with the organisation’s systems.

It is the organisation’s obligation to identify the relevant health and safety risks and define how they will be controlled, ensuring that the level of control is “Reasonably Practicable”. It is the employee’s obligation to comply with the organisation’s requirements.

So, what might Reasonably Practicable look like in practice?

I recently defended a case that involved a worker who was seriously injured at work. Although the injury did not result from a fall from height, the prosecution case against my client was based on failure to meet its obligations about working at heights.

My client had, on any measure, a Proper System for managing the risk of work at heights. They had a documented working at height Standard and Procedure both of which were consistent with industry best practice and regulator guidance material. All work at height above 1.8 m required a permit to work and a JHA. The documented procedures prescribed appropriate levels of supervision and training.

In the three years before the relevant incident, my client had not had a working at height incident of any sort nor had they had a health and safety incident at all. Based on all of our investigations as part of preparing the case, there was nothing to suggest that the incident information was not legitimate.

The activity which was being performed at the time of the incident was conducted routinely, at least weekly, at the workplace.

In looking to construct a Reasonably Practicable argument to defend the case what would we be trying to do? In essence, I would be trying to establish that the incident was an aberration, a “one off departure” from an otherwise well understood, consistently applied system of work that was wholly appropriate to manage the risk of working at heights.

In practice, that would mean:

There may be other information that we would seek, but in broad terms, the information outlined above helps to build a case that there was a proper system that was effectively implemented and that:

What happened?

Rather than be able to demonstrate that the incident was a one-off departure from an otherwise effective system, the evidence revealed a complete systemic failure. While the documented system was a Proper System and complied with all relevant industry standards and guidelines, it was not implemented in practice.

Most compelling was the fact that, despite this being a weekly task, there was not a single instance of the working at height Standard and Procedure being complied with. We could not produce a single example where either the injured worker or indeed any worker who had performed the task had done so under an approved permit to work with an authorised JHA.

All of the workers gave evidence that the primary risk control tool on site was a Take 5. The Take 5 is a preliminary risk assessment tool, and only if that risk assessment scored 22 or above was a JHA required. The task in question was always assessed as 21. The requirement for a JHA, in the minds of the workforce, was never triggered and none of them understood the requirements of the Standard or Procedure.

To me, this case is entirely indicative of the fundamental failure of Reasonably Practicable in most workplaces. In the vast majority of cases that I have been involved in over the last 25 years, organisations have systems that would classify as Proper Systems. They are appropriate to manage the risk that they were designed to manage.

Equally, organisations cannot demonstrate Adequate Supervision. While there may be audits, inspections, checking and checklists – there is no targeted process specifically designed to test and understand whether the systems in place to manage health and safety risks in the business are in fact implemented and are effective to manage those risks.

In my experience, most organisations spend far too much time trying to devise the “perfect” Proper System. We spend far too little time understanding what needs to be done to confirm that the System works, and then leading the confirmation process.

Reasonably Practicable has not changed.

Reasonably Practicable is not a numeric equation.

Reasonably Practicable changes over time.

Reasonably Practicable is an intellectual exercise and a judgement call to decide how an organisation will manage the health and safety risks in its business.

Reasonably Practicable requires an organisation to demonstrate that they:

What constitutes Proper Systems and Adequate Supervision is a judgement call that needs to be determined with regard to the risks. It requires an organisation to balance the risk against the cost, time and trouble of managing it.

[1] See the National Review into Model Occupational Health and Safety Laws: First Report, October 2008.

[2] Slivak v Lurgi (Australia) Pty Ltd [2001] HCA 6 [53].

[3] Baiada Poultry Pty Ltd v The Queen [2012] HCA 14 [33].

[4] See also: Safe Work NSW v Wollongong Glass P/L [2016] NSWDC 58 and Collins v State Rail Authority of New South Wales (1986) 5 NSWLR 209.

[5] There are some exceptions to this where an individual, usually a manager or statutory officeholder will be required to undertake some action that is Reasonably Practicable.

This article is a general discussion about Reasonably Practicable and related concepts. It should not be relied on, and is not intended to be specific legal advice.

Some time ago I wrote a post about the value of criminal prosecutions for safety breaches as part of effective safety management. The post is available HERE.

A discussion about the nature of “safety prosecutions” was recently held on LinkedIn following an article I posted about the acquittal of engineers involved in the Deepwater Horizon disaster in the Gulf of Mexico (see for example the CSB Report). You can see the LinkedIn discussion HERE.

Given the limited scope to expand a discussion in LinkedIn comments, I promised to write a fuller article, which I have attempted to do below.

The starting point for discussion about safety prosecutions is, I think, to understand what prosecutions are designed to achieve.

Inevitably in any discussion about safety prosecutions there is a multiplicity of views about what people perceive the process is designed to achieve. These include compensation, punishment, deterrence and the opportunity to “learn lessons”.

In Australia at least, it seems unlikely that the current prosecution regime would fulfil any of these perceptions.

First, occupational safety and health prosecutions are not designed to compensate anyone. The workers compensation regime and/or civil proceedings (i.e. claims in negligence) are designed to compensate people for loss caused by workplace accidents and incidents. They are an entirely separate legal process, and compensation does not form part of the consideration of a criminal occupational safety and health prosecution.

Neither are occupational safety and health prosecutions designed as an opportunity to learn lessons. Prosecutions are typically run in relation to a very narrow set of charges and “particulars”. For example, if it is alleged that an employer failed to do everything reasonably practicable in that it failed to enforce its JHA procedure, then the prosecution is about whether:

There are no lessons about what might constitute a good JHA procedure, or a good process for ensuring that the procedure is followed.

As a more practical matter, prosecutions are very limited in their ability to teach us lessons because inevitably any decisions are made several years after the event occurred. In many cases decisions are not even published so that even if there were lessons that could be learned, they are not available to us.

Theoretically, prosecutions are designed to punish wrongdoers and provide both specific and general deterrence, that is, deter the guilty party from offending again and act as a warning to all other parties not to offend in the future.

Again, the evidence is far from clear that occupational safety and health prosecutions achieve this outcome, insofar as there does not appear to be evidence that a robust prosecution regime decreases the number of health and safety incidents.

For example, the ninth edition of the Workplace Relations Ministers’ Council Comparative Performance Monitoring Report, issued in February 2008, shows that Victoria and Western Australia, which had the lowest rate of prosecutions resulting in conviction at the time, also had the lowest incidence rates of injury and disease and enjoyed the greatest reduction in average workers’ compensation premium rates over the three years to June 2006.

Of course, as with all statistical information, there could be any number of reasons for this finding. My point is not whether the finding is right or wrong. My point is we do not have the evidence and we have not had the discussion.

However, the limited efficacy of criminal proceedings should not come as a surprise. The Robens Report published in the 1970s, and on which modern Australian health and safety legislation is based, identified:

The character of criminal proceedings against employers is inappropriate to the majority of situations which arise and the processes involved make little positive contribution towards the real objective of improving future standards and performance.

One of the ironies inherent in this discussion is that it is often the safety industry that is at the vanguard of the charge calling for significant prosecutions and directors to be sent to jail in the event of workplace accidents. This is the same industry that thrives on selling poor quality incident investigation processes based on a “no blame” culture.

It is interesting that the industry can say on one hand that we can only achieve effective safety outcomes where we don’t seek to blame, but that if something serious happens (i.e. someone dies) then there must be someone to blame and they should be prosecuted with the full force and effect of the law.

To me, this discussion is another example of the opportunity lost during the “harmonisation” of Australia’s health and safety legislation.

Rather than an informed discussion about how health and safety legislation could achieve the best health and safety outcomes, there seemed to be a broad assumption – not argued at best, unproven at worst – that, notwithstanding 20 or more years of history, prosecutions, large fines and personal liability were the best approach to improving health and safety outcomes in Australia.

I have personal views about what might be a better process to deal with those workplace accidents that are serious enough to warrant a “public response”, but this article is not the place to describe them. Rather, I hope that this article might prompt the safety industry to think more carefully about what it wants from its regulations and regulator and not use every workplace tragedy as an opportunity to promote the language of blame as an appropriate response to workplace accidents.

We cannot continue to promote safety using the message of fear and blame and then be surprised by how difficult it is to shift culture in an organisation.

Effective from 7 December 2015, Safe Work Australia has published 10 guides and information sheets on managing the risks associated with inspecting, maintaining and operating cranes, and plant that can be used as a crane and quick hitches for earthmoving machinery. This move is part of an agreement by SWA members in 2014 to replace the draft model WHS Code of Practice for cranes with guidance material.

You can access the SWA “cranes guidance material” page HERE.

This approach does create some interesting jurisdictional issues. For example, New South Wales, which operates under the WHS legislation, has an approved code of practice for managing the risks of falls at a workplace – which means it has a specific legislative standing, different from guidance material. This code of practice includes a section on “work boxes”, but it has different information from the material set out in the SWA guide on “crane lifted work boxes”.

For example, the SWA guide states that work boxes should:

However, none of these points are mentioned in the approved code of practice.

A common failing of safety management systems is the level of internal inconsistency that develops as layers of safety management processes are built up over time. It seems that the regulator is not immune from this problem.